Can you use AI for alt text?

AI seems to get better every day. Should we drop the alt attribute and let AI describe everything?

Reading time: 6–10 minutes

Artificial intelligence (AI) has come a long way in recent years. For more than a decade it has been able to identify objects in pictures. Since lots of people forget to add descriptions to their images, it's worth asking: is it time to let the robots take over?

Background and caveats

I did the original groundwork for this post in 2021, as part of a thesis entitled "Will AI replace the alt attribute?". This post summarizes the interesting findings without reading like a research paper.

I won't be explaining alt text here. If you're new to accessibility, check this WebAIM article on alternative text to get up to speed. I'll use both 'alt text' and 'image description' to mean the same thing in this post.

The project involved using 50 images to represent how images might be used on the web. I then tested how some real-life image description tools performed. This post includes a few specific examples, but the full results are available too.

No sample of 50 images could ever represent every possible use of every possible image. There are categories which, if included, would change the results.

For example, people sometimes use images of stylized text, and extracting text is the type of thing modern AI can do very reliably. These weren't included because in most contexts, it's better to use real text.

I don't depend on image descriptions in my everyday life. When I comment on what seems better or worse, this is based on my accessibility experience.

I've labelled some links "via ACM". Unless you're an ACM member, or have access to an academic library, you might hit a paywall. You may be able to ask the author for help if there's something you especially want to read.

Where we are now

I began my research with the academic literature and found a few publications. Quite a lot of people have tried this idea, with some attempts dating as far back as 2015. Some people also provide do-it-yourself instructions using a cloud service.

My work focused on three specific cases, which I'll look at in a bit more detail now.

Facebook

Facebook generates a description for each uploaded picture. The descriptions seem to be generated right after upload, and they are available to all users who see the picture. At the time of publication, the uploader couldn't add a description of their own, but this seems to have been added since.

Facebook's publication was all the way back in 2017. It's clear that their approach has improved since then. Their model could, at the time of publication, identify about 100 common objects or themes. These objects and themes were chosen based on a sample of uploaded Facebook photos.

Generating the descriptions was pretty simple. The model gives the percentage chance that each of these topics is present. Any topics over 80% are included after the text "May be an image of". The current version also supports images of text and correctly describes categories of images, like "comic strip" and "meme".
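The thresholding rule can be sketched in a few lines of Python. This only illustrates the rule as described here and is not Facebook's code: the function name, the example scores, and the choice to list topics from most to least confident are all assumptions.

```python
def describe(scores, threshold=0.8):
    """Build a 'May be an image of ...' string from topic confidences.

    scores maps a topic name to the model's confidence (0.0 to 1.0).
    Returns None when no topic clears the threshold.
    """
    # Keep only topics the model is confident about, most confident first
    # (the ordering is an assumption made for this sketch).
    kept = sorted(
        (name for name, score in scores.items() if score > threshold),
        key=lambda name: scores[name],
        reverse=True,
    )
    if not kept:
        return None
    if len(kept) == 1:
        listing = kept[0]
    else:
        listing = ", ".join(kept[:-1]) + " and " + kept[-1]
    return "May be an image of " + listing


# Hypothetical scores for a snowy landscape photo.
snowy_landscape = {"nature": 0.97, "sky": 0.93, "tree": 0.88, "person": 0.12}
print(describe(snowy_landscape))
```

A rule like this can only ever name topics the model was trained on, which is why the output reads like a list of tags and not a sentence.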

For example, this snowscape gets the description "May be an image of nature, sky and tree".

The most surprising thing for me when I read the original Facebook paper (via ACM) was how much users liked it. If a friend proposed this description of the image, I'd suggest it's not good enough.

In Facebook's field study, they A/B tested an early version of these descriptions with users who used a screen reader inside the Facebook app. 70% of users in the control group (no descriptions) found it "extremely hard" to tell what images were about; this fell to below 40% in the test group. More than 20% of the test group called it "somewhat easy", up from about 0%. I suggest reading the full paper if you can. It's a well-done piece of research.

Twitter a11y Chrome Plugin

This browser plugin was created as part of a research project from Carnegie Mellon University and others (via ACM). It brings together a whole bunch of methods, including:

- following any URLs in the tweet to try and find a copy of the image with an intact description,

- attempting to recognise any text in the image and detecting screenshots of tweets,

- object detection, very similar to that described above,

- finding a matching image from anywhere on the web and copying its description.

This was only ever experimental, but it should give an idea of what an optimal solution might look like. There's an ensemble of specialised methods, which work together to describe lots of types of images.

One nice touch is that every description generated includes a source. Users might be able to build a mental model of which sources are more reliable than others. The research paper also includes method-by-method usefulness ratings gathered from users.
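The idea of an ensemble that labels each description with its source can be sketched as a simple fallback chain. This is a minimal illustration, not the plugin's implementation: the tweet is modelled as a plain dictionary, the three stand-in methods just read pre-filled keys, and the order in which methods are tried is an assumption.

```python
from typing import Callable, List, Optional, Tuple

# Each method takes a tweet (here a plain dict) and returns a description or None.
Method = Callable[[dict], Optional[str]]


def from_linked_page(tweet: dict) -> Optional[str]:
    # Stand-in for following URLs in the tweet to find the original alt text.
    return tweet.get("linked_alt")


def from_text_recognition(tweet: dict) -> Optional[str]:
    # Stand-in for recognising text in the image.
    return tweet.get("ocr_text")


def from_automatic_captioning(tweet: dict) -> Optional[str]:
    # Stand-in for an object detection or captioning model.
    return tweet.get("caption")


# Tried in this order; the ranking is an assumption made for this sketch.
METHODS: List[Tuple[str, Method]] = [
    ("the linked page", from_linked_page),
    ("text recognition", from_text_recognition),
    ("automatic captioning", from_automatic_captioning),
]


def describe_with_source(tweet: dict) -> Optional[str]:
    """Return the first available description, prefixed with its source."""
    for source, method in METHODS:
        text = method(tweet)
        if text:
            return f"From {source}: {text}"
    return None


print(describe_with_source({"caption": "a person wearing a suit and tie"}))
```

Keeping the source in the output costs a few extra words per image, but it lets the reader decide how much to trust each description.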

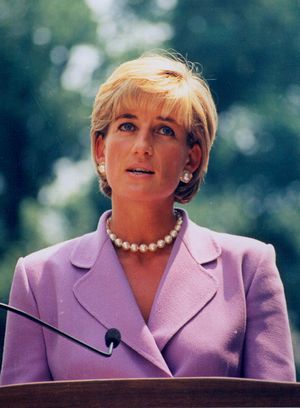

For example, this image gets "From automatic captioning: Diana, princess of wales wearing a suit and tie".

Yeah, there's no tie. I guess the model's "suit and tie" would be better labelled as "dressed formally". This was the only time a picture of a public figure got an acceptable level of description in the whole set.

AccessiBe

I'm not very positive about the role of accessibility overlays. Even without the often misleading marketing, they feel misplaced. If users needed changes like the ones they make, surely the user's browser is the right place to provide them?

Despite that, I compared the performance of the automatic alt text features in AccessiBe on the same set of images. The company provides almost no information on how it works. At the time of writing, the website says the widget will "provide accurate and elaborate alternative text" when it is missing.

One important note is that it also appears to add role="presentation" whenever it fails to describe an image. This is bad. This communicates to assistive technology users that the image has no meaningful content. It also prevents automated accessibility checkers spotting the missing description. All of the test images were non-decorative, but this happened quite a few times.
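Because this pattern hides images from automated checkers, one option is to list every image marked as presentational with no alt text and review those by hand. The sketch below uses Python's standard `html.parser` module; the class name, function name and sample markup are hypothetical. It cannot tell whether an image really is decorative, it only produces a list for a human to check.

```python
from html.parser import HTMLParser


class SuppressedImageFinder(HTMLParser):
    """Collect <img> elements marked as presentational that carry no alt text."""

    def __init__(self):
        super().__init__()
        self.suspects = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # Attribute values can be None when written without a value.
        role = (attributes.get("role") or "").lower()
        alt = (attributes.get("alt") or "").strip()
        # role="none" is a synonym for role="presentation" in WAI-ARIA.
        if role in ("presentation", "none") and not alt:
            self.suspects.append(attributes.get("src", "(no src)"))


def find_suppressed_images(html):
    """Return the src of each image that should be reviewed by a person."""
    finder = SuppressedImageFinder()
    finder.feed(html)
    finder.close()
    return finder.suspects


page = (
    '<img src="a.png" role="presentation">'
    '<img src="b.png" alt="A cat asleep on a keyboard">'
    '<img src="c.png" role="presentation" alt="">'
)
print(find_suppressed_images(page))
```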

A few images got reasonable descriptions. This stock photo of pancakes got "KEEP A FRON. Pancakes with black berries on white ceramic plate beside clear drinking glass". It picked up the "Keep away from fire" label on the napkin, which became "KEEP A FRON", but the description was the most thorough for this image.

How it's going

The full results from the side-by-side tests look like this.

| Platform | Acceptable | Some Content | Missing | Misleading |
|---|---|---|---|---|
| Facebook | 6 | 19 | 16 | 9 |
| Twitter a11y | 8 | 2 | 26 | 14 |
| AccessiBe | 4 | 24 | 16 | 6 |

So every approach available at the moment produces some acceptable results, but every approach returns more misleading results than acceptable ones.
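To put those counts in proportion, here is a small sketch that turns each row into percentages and checks the comparison. The counts are copied from the results table; the function and key names are just for illustration.

```python
# Counts per tool: (acceptable, some content, missing, misleading).
results = {
    "Facebook": (6, 19, 16, 9),
    "Twitter a11y": (8, 2, 26, 14),
    "AccessiBe": (4, 24, 16, 6),
}


def summarize(counts):
    """Return totals and percentages for one row of the results table."""
    acceptable, some_content, missing, misleading = counts
    total = sum(counts)
    return {
        "total": total,
        "acceptable_pct": 100 * acceptable / total,
        "misleading_pct": 100 * misleading / total,
        "more_misleading_than_acceptable": misleading > acceptable,
    }


for platform, counts in results.items():
    s = summarize(counts)
    print(
        f"{platform}: {s['acceptable_pct']:.0f}% acceptable, "
        f"{s['misleading_pct']:.0f}% misleading"
    )
```

Each row adds up to the 50 test images, so the acceptable rate is 12% for Facebook, 16% for Twitter a11y and 8% for AccessiBe, against misleading rates of 18%, 28% and 12%.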

The results vary a bit by category too. Each category of images had five examples, but no tool correctly described more than two in any one category. For example, Facebook did a reasonable job ("some content" or "acceptable") with all memes and four out of five personal photographs. That probably reflects the fact that those are most of what people are sharing on Facebook, so that's what their custom model is tuned for.

Except for the Diana, Princess of Wales example, by Twitter a11y, no public figures were recognised at all by any tool.

Pictures of identity documents were inspired by a post where our local police announced a new design for identity cards. Facebook always described them misleadingly. It's likely that Facebook's custom model didn't include any identity documents. It was trained only using public Facebook photos and it's a bad idea to post pictures of real identity documents on social media.

Facebook often picked out the photograph on the document and said that the image was of a person. At the other extreme, AccessiBe sometimes included words from the document. This gave a long description that failed to mention that the text appeared on a document.

No animated GIF, button, icon or infographic got a good automated description with any tool.

Logos which include a wordmark were usually correctly described - AI has been good at recognising text for a long time. Those with no wordmark were usually not. Even the Olympic rings, one of the most recognisable symbols in the world, were not adequately described anywhere.

There are probably lots more insights to glean from the original results.

So, should you use it?

Probably not.

If you have a website of your own, or you work on a website or app where you can require people to write image descriptions and educate them if they don't get it right, this is a much better option.

This is the case if you have a blog, a business website or an online store. Learning to write good descriptions and helping your colleagues to do the same isn't much work. Users who rely on these descriptions will get a much better experience this way.

But what if you are Facebook, or something of a similar size? Your users have had a decade of uploading images without an extra step of describing them. You don't have a good way to manage the quality of the descriptions users type.

Generally, as an analysis of images on Twitter (open access) found, when describing images is optional, people describe a tiny proportion of images (less than 0.1%). When they do write a description, it's often good, but it's still a tiny proportion.

For their particular problem, Facebook's approach of a well-tuned model for the types of images used seems reasonable. It's clearly better than no descriptions, but nowhere near as good as well-written descriptions by humans.

But you're probably not just like Facebook, so this solution is probably not the right one for you. Keep writing your own descriptions.